The Economist has released its annual international ranking of full-time MBA programs, based on data collected during spring 2017. Two surveys were used: 1) The first, completed by schools with eligible programs, covered quantitative data and accounted for 80% of the ranking. 2) The second, a qualitative survey completed by current MBA students and each school’s most recent graduating MBA class, accounted for the remaining 20% of the ranking.

The factors included:

• Percentage of graduates receiving a job offer within three months of graduation

• Student assessment of their program’s career services

• Quality of faculty

• Overall educational experience

• Post-MBA salary

• Percentage increase between pre- and post-MBA salary

• Breadth of alumni network

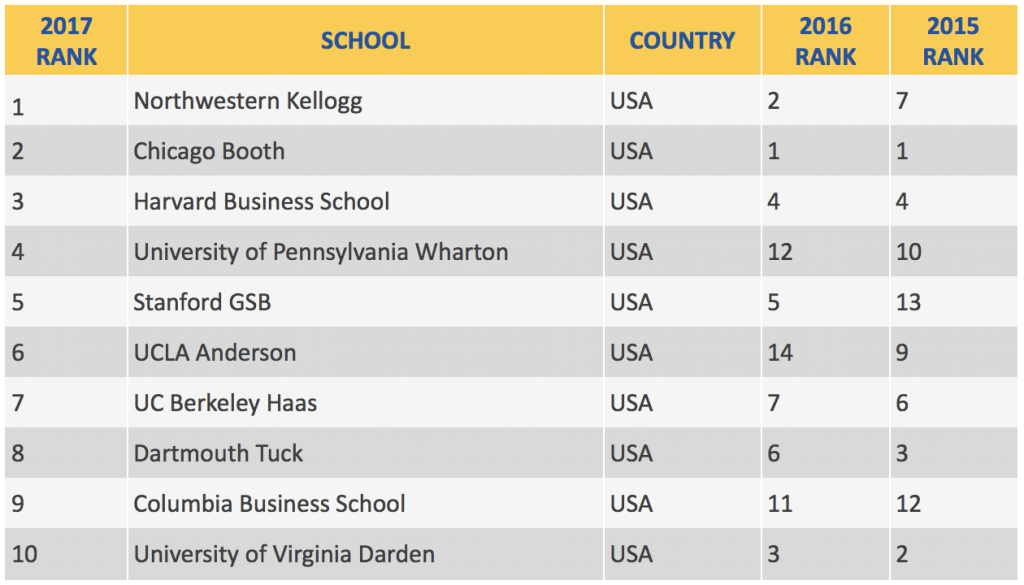

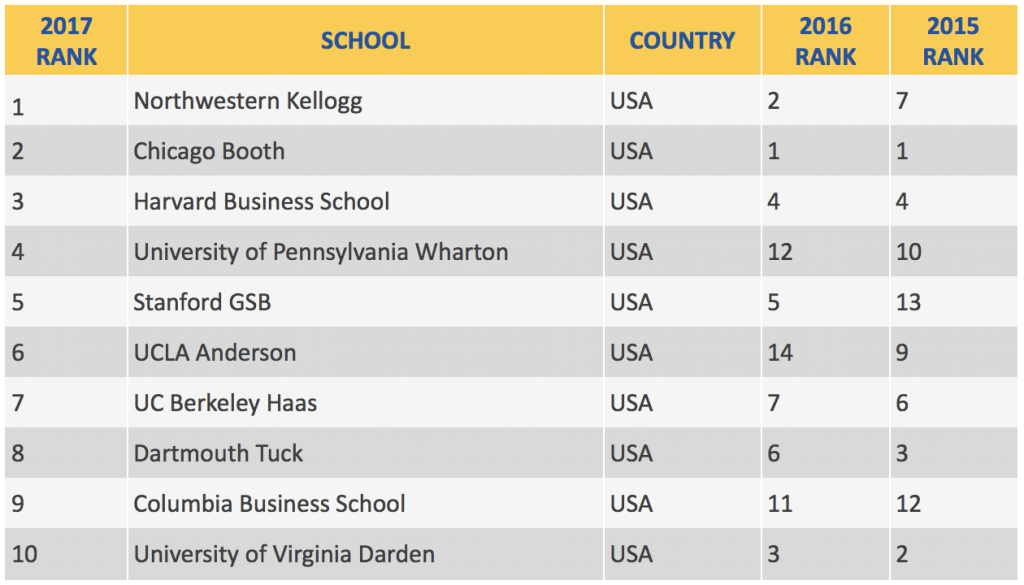

A total of 100 schools were ranked by The Economist. Here are the top 10 ranked schools:

OF NOTE:

This is the first year since at least 2008 (the earliest rankings I could find) that the top 10 schools are all from the US. In fact, the first non-US school doesn’t make an appearance until HEC Paris at #15 (down from #9 in 2016 and #5 in 2015).

As usual, The Economist rankings are a rollercoaster ride for some schools. Wharton went from #12 to #4, which seems close to where it should be, and UCLA Anderson is currently at #6, having been #14 and #9 in 2016 and 2015, respectively. UVA Darden fell from #3 to #10.

Since schools change slowly in reality, the volatility of The Economist rankings, not to mention some of the outcomes (MIT is #19, below HEC Paris, Queensland, IESE, and Warwick, and one notch above University of Florida), makes this one a head-scratcher. If you use it at all, make sure you understand the factors that go into this ranking and compare them to your own criteria.

Need help choosing — and then applying to — the best MBA program for you? Get matched with one of our expert advisors for one-on-one consulting that will help you get accepted!

For 25 years, Accepted has helped business school applicants gain acceptance to top programs. Our outstanding team of MBA admissions consultants features former business school admissions directors and professional writers who have guided our clients to admission at top MBA, EMBA, and other graduate business programs worldwide including Harvard, Stanford, Wharton, Booth, INSEAD, London Business School, and many more. Want an MBA admissions expert to help you get Accepted? Click here to get in touch!


Related Resources:

• School-Specific Application Essay Tips for Top Business Schools

• Can You Get Into Your Dream School? – The MBA Selectivity Index

• Busting Two MBA Myths